Web scraping can be done manually, but the term generally refers to the automated process of downloading the HTML content of a page, parsing and extracting the data, and saving it into a database for further analysis or use.

When a website wants to expose data or features to the developer community, it will generally build an API (Application Programming Interface). The API consists of a set of HTTP requests, and generally responds in JSON or XML format. For example, let's say you want to know the real-time price of the Ethereum cryptocurrency in your code. There is no need to scrape a website to fetch this information, since there are lots of APIs that can give you well-formatted data.

APIs are generally easier to use. The problem is that lots of websites don't offer any API. Building an API can be a huge cost for companies: you have to ship it, test it, handle versioning, and write the documentation, and there are infrastructure costs, engineering costs, etc. The second issue with APIs is that sometimes there are rate limits (you are only allowed to call a certain endpoint X times per day or hour), and the third issue is that the data can be incomplete.

The good news is that almost everything you can see in your browser can be scraped.

Web scraping use cases

Almost everything can be extracted from HTML; the only information that is "difficult" to extract is inside images or other media. Here are some industries where web scraping is being used:

- News portals: to aggregate articles from different data sources (Reddit, forums, Twitter, specific news websites).
- The travel industry (flight and hotel price comparators).
- E-commerce: to monitor competitor prices.
- Banks: bank account aggregation (like Mint and other similar apps).
- Journalism: also called "data journalism".

As you can see, there are many use cases for web scraping.

Mint is a personal finance management service. It allows you to track the bank accounts you have in different banks in a centralized way, among many other things. Mint uses web scraping to perform bank account aggregation for its clients. It's the classic problem we discussed earlier: some banks have an API for this, others do not. So when an API is not available, Mint is still able to extract the bank account operations.

A client provides their bank account credentials (user ID and password), and Mint robots use web scraping to do several things:

- Fill the login form with the user's credentials.
- For each bank account, extract all the bank account operations and save them into the Mint back-end.

With this process, Mint is able to support any bank, regardless of the existence of an API, and no matter what backend/frontend technology the bank uses. That's a good example of how useful and powerful web scraping is. The drawback, of course, is that each time a bank changes its website (even a simple change in the HTML), the robots have to be changed as well.

Parse.ly is a startup providing analytics for publishers. Its platform crawls the entire publisher website to extract all posts (text, metadata, and so on) and performs Natural Language Processing to categorize the key topics and metrics. It allows publishers to understand what underlying topics the audience likes or dislikes.

Jobijoba is a French/European startup running a platform that aggregates job listings from multiple job search engines like Monster and CareerBuilder, as well as multiple "local" job websites. The value proposition here is clear: there are hundreds if not thousands of job platforms, applicants need to create as many profiles on these websites for their job search, and Jobijoba provides an easy way to visualize everything in one place.

This aggregation problem is common to lots of other industries, as we saw before: real estate, travel, news, data analysis. As soon as an industry has a web presence and is fragmented into tens or hundreds of websites, there is an "aggregation opportunity" in it.
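As a rough illustration of the API route described above, the sketch below parses a JSON response carrying a cryptocurrency price. The payload shape (`symbol`, `price_usd`), the sample value, and the `parse_price` helper are all assumptions made up for this example, not the format of any real API. A real client would first fetch the body over HTTP, for instance with `urllib.request.urlopen`.

```python
import json
from decimal import Decimal

# Hypothetical response body with a placeholder value; real APIs define
# their own field names and structure.
SAMPLE_RESPONSE = '{"symbol": "ETH", "price_usd": "100.50"}'


def parse_price(body: str) -> tuple[str, Decimal]:
    """Return (symbol, price) from a JSON body shaped like SAMPLE_RESPONSE."""
    payload = json.loads(body)
    # Decimal avoids float rounding surprises when handling money amounts.
    return payload["symbol"], Decimal(payload["price_usd"])


symbol, price = parse_price(SAMPLE_RESPONSE)
print(f"{symbol}: {price} USD")
```

The point of the comparison is that structured output like this needs no HTML parsing at all, which is why an API is usually the first thing to look for.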

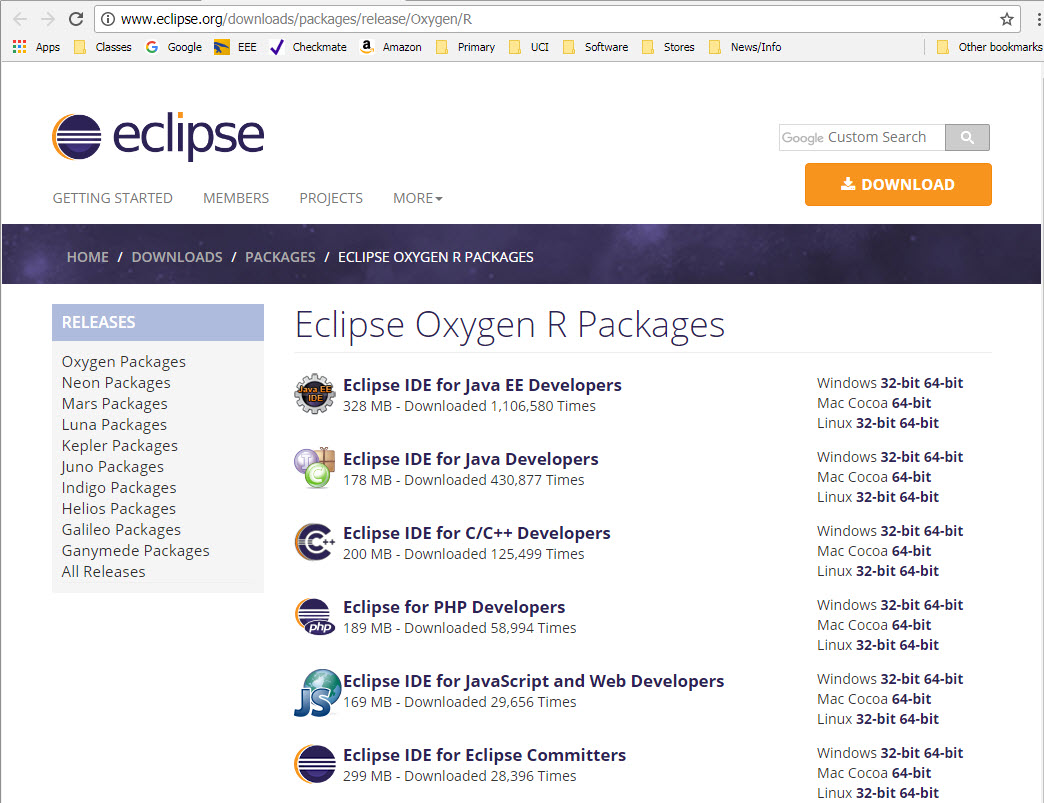

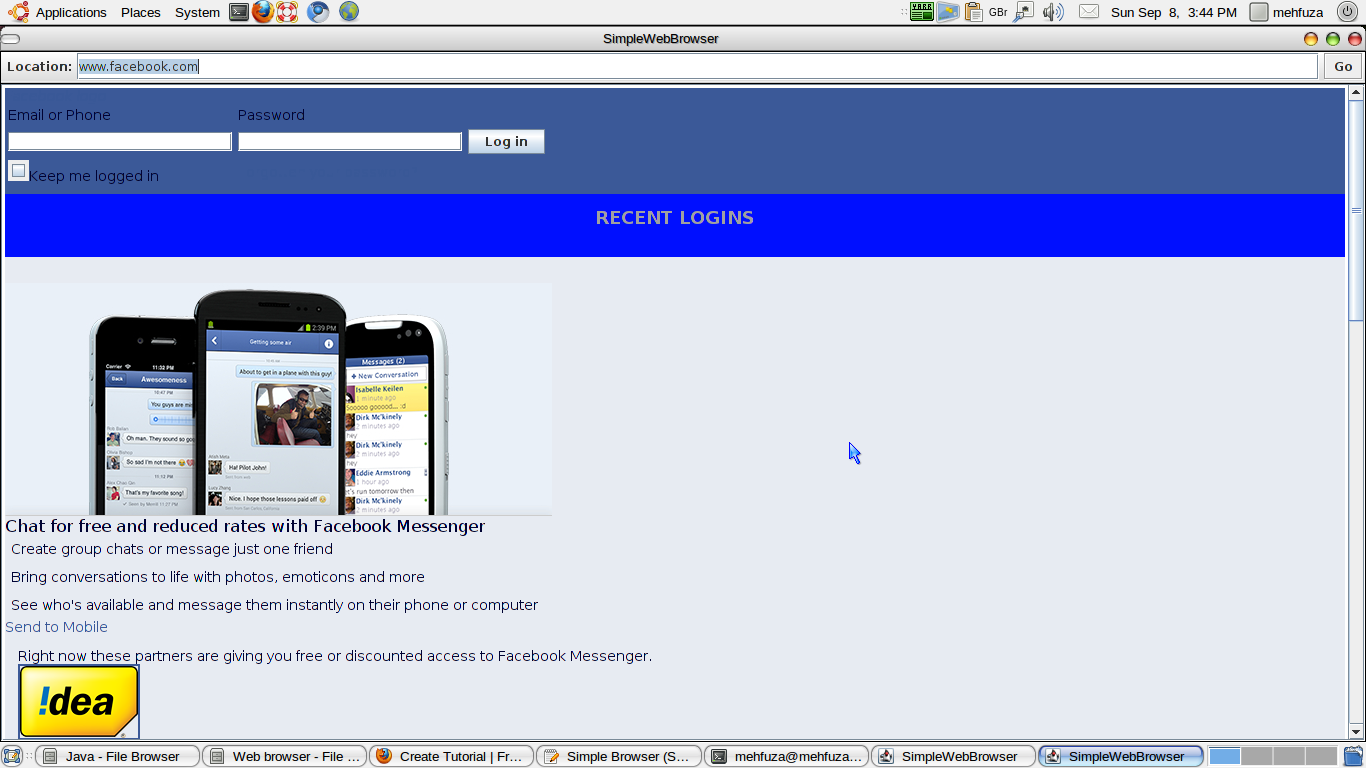


Web scraping, or crawling, is the act of fetching data from a third-party website by downloading and parsing its HTML code to extract the data you want.
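To make the "parse the HTML and extract the data" step concrete, here is a minimal sketch using only Python's standard library. The sample markup, the `item` class name, and the `ItemLinkParser` and `extract_items` names are hypothetical and chosen for illustration. The download step is replaced by an inline string so the sketch runs offline.

```python
from html.parser import HTMLParser

# Stand-in for a downloaded page so the sketch runs offline; a real scraper
# would fetch the markup first, e.g. with urllib.request.urlopen(url).read().
SAMPLE_HTML = """
<html><body>
  <h1>Listings</h1>
  <a class="item" href="/item/1">First item</a>
  <a class="item" href="/item/2">Second item</a>
  <a href="/about">About</a>
</body></html>
"""


class ItemLinkParser(HTMLParser):
    """Collect (href, text) pairs for <a class="item"> elements."""

    def __init__(self):
        super().__init__()
        self.items = []
        self._current_href = None  # set while inside a matching <a>
        self._text_parts = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "a" and attributes.get("class") == "item":
            self._current_href = attributes.get("href")
            self._text_parts = []

    def handle_data(self, data):
        # Only keep text that appears inside a matching link.
        if self._current_href is not None:
            self._text_parts.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._current_href is not None:
            text = "".join(self._text_parts).strip()
            self.items.append((self._current_href, text))
            self._current_href = None


def extract_items(html: str) -> list[tuple[str, str]]:
    """Parse the markup and return the extracted (href, text) pairs."""
    parser = ItemLinkParser()
    parser.feed(html)
    parser.close()
    return parser.items


for href, text in extract_items(SAMPLE_HTML):
    print(href, text)
```

Matching on an exact `class` value keeps the sketch short, but it is also why scrapers are fragile: a small change in the page's markup is enough to break the extraction.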


You don't have to give us your email to download the eBook, because like you, we hate that: DIRECT PDF VERSION.

Feel free to distribute it, but please include a link to the original content (this page).


This guide was originally written in 2018 and published here. We've decided to republish it for free on our website.